There’s an old joke, a kind of moral story, that begins with a man outside on his hands and knees searching for his keys.

In some versions of the story he’s drunk.

In all of them, it’s night time and he’s illuminated by the glow from a street lamp.

A police officer comes along and notices that the search appears to be fruitless. “Why are you searching under the streetlight?” the officer asks. “Are you sure you dropped them around here?”

“Probably not,” the man replies, “but the light is better here.”

As with most fables, it’s exaggerated to the point of sounding incredible. But what it’s describing – the tendency to keep our searches convenient and comfortable, even if it’s clearly not in our best interests – is highly relatable.

Who hasn’t bought what turned out to be an inferior product because it was the first one we saw in the shop? Or the one we best remember from the ads? Or because a friend with completely different tastes to us recommended it? We can all identify with the regret that comes upon realising, “Oh no. I should have done some more research.”

The streetlight effect is a cognitive bias, an error in thinking that can lead us to make incorrect or irrational decisions. And it affects all of us in all sorts of different areas of our lives, including in our work.

In this article, we’ll look at how it relates to a very particular kind of search: the investigation and analysis of data.

How can the streetlight effect skew your data analysis?

We analyse data to help us make better decisions. Better marketing decisions, for example.

Logically then, the better the data, and the better the analysis, the better the decisions we end up making.

But the streetlight effect can drastically reduce the quality of data collection and assessment.

How?

Before we get to that, it’s important to point out that the effect as it relates to data covers two similar, but not identical, biases. As Jeff Haden has written, it isn’t just about using the information that’s “easiest to find”, but also the data that’s easiest to “stand behind”.

The effect is about illogically minimising effort, and we’re talking about both mental and emotional effort.

We use the word “illogical” because the streetlight effect may save us time or inconvenience, but it may also render our search inadequate.

An arguably reductive articulation of the much-cited Occam’s Razor says that “the simplest explanation is preferable”. But simplicity is very different to convenience.

And “simplest” is relative.

To quote another great philosopher (Sherlock Holmes): “When you have eliminated the impossible, whatever remains, however improbable, must be the truth.”

The streetlight effect has the potential to decrease the value of our data by encouraging us to ignore the improbable, the unusual, the unconventional… the difficult.

To put it simply, if you’re only looking under the streetlight, are you really getting what you need from your data?

Perfect data? There’s no such thing.

So, how do we think beyond the streetlight and search for the perfect set of data?

We don’t.

It’s vanishingly rare to have perfect information available to us in any part of our lives. When it comes to data we use for marketing – website user data for example – there’s really no such thing.

And that’s completely OK.

We humans are mostly pretty good at making decisions that aren’t immaculately rational, but which are good enough to meet our needs. This is known as “satisficing”, a term coined by Nobel laureate Herbert A. Simon in the mid-1950s.

In data analysis there’s almost never a need to create a pristine data picture. Instead, we as marketers can “satisfice”. We can be comfortable with “good enough”.

There is, however, a distinct difference between a “good enough” data set and a data set skewed by the fact it’s taken entirely from a convenient or obvious location.

Yes, it’s true that in some cases we might get what we need from looking under the streetlight. But in others – in most cases, really – when we keep the scope of our search narrow, we’re consigning ourselves to a data picture that is mediocre. Or even misleading.

It’s a trap! But we keep falling in.

Why do we keep being swallowed by this trap? Because it’s human nature.

Many cognitive biases are exacerbated or made possible by other cognitive biases. The streetlight effect is one.

Think of confirmation bias, the tendency to look for data that supports our hypothesis, assumptions, or prejudices. It absolutely encourages us to keep looking under the streetlight.

And when you combine confirmation bias with the illusion of significance – the tendency to overestimate the importance of our own actions or decisions – you can see how we’re only more likely to opt for what’s comfortable and flattering, rather than what’s true.

Then there’s the status quo bias, under the influence of which we might ignore potential alternatives to a default setting or process. Client-side analytics is a good example of that. For many organisations, the term isn’t even on the radar, partly because they simply don’t know there’s another option. But there is, as Animals Australia have shown. And after considering and implementing a different option in the area of data analytics, they’re now enjoying all sorts of advantages.

And the list goes on…

The cognitive bias trap is often difficult to see and all too easy to succumb to.

The good news is that falling in is common, but it’s not inevitable.

So what can we do to avoid the streetlight effect?

Tips to help you turn your back on your streetlight search.

There are lots of ways to minimise the influence of the streetlight effect. Below are four of the most important for data collection and analysis:

Be conscious of your biases

The first thing to do as you start thinking about moving away from what’s easy and convenient relates directly to what we’ve just talked about: becoming cognisant of your biases.

It relates to all the other tips below, as well.

You can’t eliminate all biases, but simply by recognising that you have them, you’re working towards a better data set. And a better way of assessing that data.

Beware vanity metrics

A vanity metric is a figure that looks “high” or “good” on paper but which doesn’t actually measure anything of use. Either that or it’s downright deceptive.

You can drive a million people to your website, but if no one is converting, that number is meaningless.

Let’s use bounce rate as a more specific example.

To some extent, the enthusiasm for quoting bounce rates is a classic example of the streetlight effect. It was a stat in default reports within Google’s Universal Analytics platform for many years. Because of its visibility and how easy it was to monitor, people assumed it was important and meaningful.

But that’s true only in very particular situations and for very particular kinds of marketing teams. Bounce rate is not the kind of headline metric every organisation should concentrate on.

In truth, it’s slowly starting to lose its shine, but it continues to get used, and incredibly low bounce rates continue to be employed as solid evidence of incredible website content. They almost never are.

(In the newest version of Google Analytics, colloquially known as GA4, bounce rate is not front and centre – and that’s good news. The focus is much more on engagement rate: what percentage of users took an action on the page that would indicate they weren’t quickly leaving without reading.)
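To make the difference between the two metrics concrete, here’s a minimal Python sketch. It assumes the definition in Google’s GA4 documentation at the time of writing: a session counts as engaged if it lasts longer than 10 seconds, includes a key event, or has at least two page views, and bounce rate is simply the share of sessions that were not engaged. The `Session` class and the five sample sessions are invented for illustration; none of this is GA4’s own API.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """A hypothetical, simplified record of one website session."""
    duration_seconds: float
    page_views: int
    key_events: int


def is_engaged(session: Session) -> bool:
    # Assumed GA4-style rule: any one of these three signals is enough.
    return (
        session.duration_seconds > 10
        or session.page_views >= 2
        or session.key_events >= 1
    )


# Invented sample data, not real analytics output.
sessions = [
    Session(duration_seconds=4, page_views=1, key_events=0),   # quick exit
    Session(duration_seconds=95, page_views=1, key_events=0),  # read one page properly
    Session(duration_seconds=8, page_views=3, key_events=0),   # clicked around
    Session(duration_seconds=6, page_views=1, key_events=1),   # converted quickly
    Session(duration_seconds=2, page_views=1, key_events=0),   # quick exit
]

engaged_count = sum(is_engaged(s) for s in sessions)
engagement_rate = engaged_count / len(sessions)
bounce_rate = 1 - engagement_rate

print(f"Engagement rate: {engagement_rate:.0%}")
print(f"Bounce rate: {bounce_rate:.0%}")
```

Note the second session: a visitor who reads a single page for a minute and a half and then leaves counts as engaged under this rule, which is one reason a single headline number deserves a second look.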

Don’t confuse causation and correlation.

Be careful not to assume that because two things move together, one must be causing the other.

A ‘streetlight’ analysis of data on ice cream consumption and shark attacks leads us to a sensational potential conclusion: sharks are attracted to swimmers who’ve just eaten an ice cream!

Do we need to reconsider our love affair with Golden Gaytimes?

No. They’re delicious and don’t increase your likelihood of getting bitten by a Great White in any way. A deeper look at the data says ice-cream sales and shark attacks rise and fall at the same time due mostly to seasonal factors. When it’s warm and we’re tempted by a cool treat, there also happen to be more sharks seeking food near where we swim.
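The seasonal explanation can be sketched in a few lines of Python. Every number below is invented: we assume both ice-cream sales and shark encounters depend on temperature plus some unrelated noise, then compare the raw correlation with the correlation that remains once temperature is accounted for. The helper names (`pearson`, `residuals`) are our own, and removing a straight-line fit is just one simple way of controlling for a shared driver.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def residuals(ys, xs):
    """What is left of ys after removing a straight-line fit on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    return [(y - mean_y) - slope * (x - mean_x) for x, y in zip(xs, ys)]


# ALL FIGURES ARE INVENTED. Monthly average temperature (degrees C), Jan to Dec.
temperature = [26, 26, 24, 21, 17, 14, 13, 15, 17, 20, 22, 24]
sales_noise = [3, -2, 1, -4, 2, 0, -1, 4, -3, 2, -1, 1]
shark_noise = [1, 1, -2, -1, 0, 2, -1, -1, -1, 0, 1, 1]

# Assumption: each series is driven by temperature plus its own noise.
ice_cream_sales = [40 * t + 10 * e for t, e in zip(temperature, sales_noise)]
shark_encounters = [0.5 * t + e for t, e in zip(temperature, shark_noise)]

raw_r = pearson(ice_cream_sales, shark_encounters)
adjusted_r = pearson(
    residuals(ice_cream_sales, temperature),
    residuals(shark_encounters, temperature),
)

print(f"Raw correlation: {raw_r:.2f}")
print(f"After accounting for temperature: {adjusted_r:.2f}")
```

With this made-up data the two series look strongly related until temperature is taken out, at which point most of the relationship disappears. Neither one was ever driving the other.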

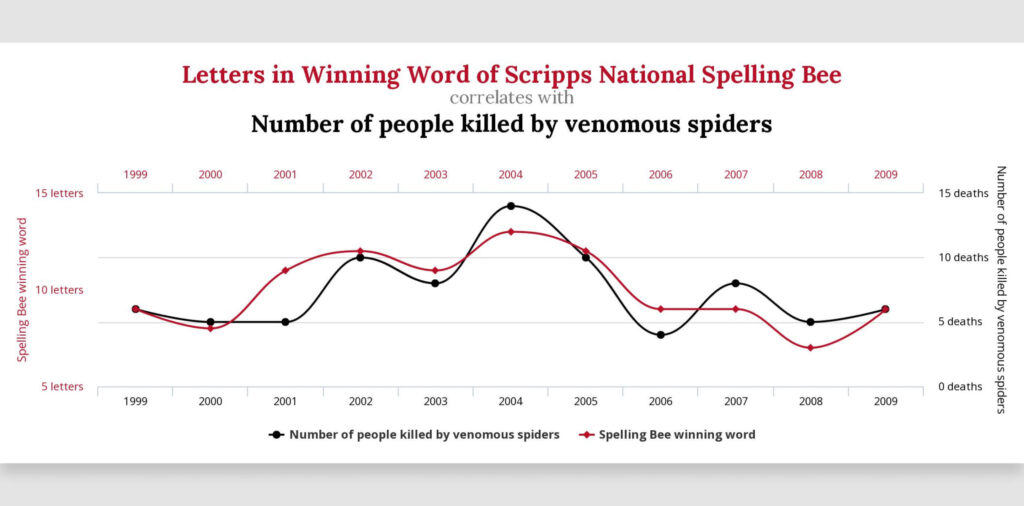

Another, similarly grisly example is this correlation between the length of winning words in a popular American spelling bee and the number of people killed by spiders.

Coincidence?

Yes. Total coincidence.

This is one of numerous instances, discovered by Harvard Law School student Tyler Vigen, of correlation clearly having nothing to do with causation.

Now, these are hyperbolic examples. In the world of your work as a marketer, where there are probably no sharks, spiders, or spelling bees, the spuriousness of the connection between two sets of data may not be so obvious.

If you push up traffic to the website, for instance, there’s a good chance you’ll observe a decrease in engagement or conversion rate. There’s a correlation there, but it doesn’t definitively tell you that the campaign you’re running is failing. You need to dig deeper, and consider the nature of the audience, their context, and various other factors before drawing your conclusions.
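Here’s a small Python sketch of why digging deeper matters. The segment names and figures are hypothetical: we assume a campaign brings in a large new audience that converts at a lower rate than existing visitors, while the existing audience behaves exactly as before.

```python
def blended(segments):
    """Return (overall conversion rate, total conversions) across segments.

    `segments` maps a segment name to a (visits, conversions) pair.
    """
    visits = sum(v for v, _ in segments.values())
    conversions = sum(c for _, c in segments.values())
    return conversions / visits, conversions


# Hypothetical figures for illustration only.
before_campaign = {"existing audience": (10_000, 300)}
during_campaign = {
    "existing audience": (10_000, 300),  # unchanged: still converting at 3%
    "campaign traffic": (20_000, 200),   # new visitors converting at 1%
}

rate_before, total_before = blended(before_campaign)
rate_during, total_during = blended(during_campaign)

print(f"Before: {rate_before:.2%} conversion rate, {total_before} conversions")
print(f"During: {rate_during:.2%} conversion rate, {total_during} conversions")
```

In this made-up scenario the headline conversion rate falls even though nobody is converting less and total conversions have gone up. The drop comes from the changed mix of visitors, which is why the rate alone can’t tell you whether the campaign is failing.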

Be vigilant.

Start with a question.

Google Analytics, the most popular analytics platform in the world, makes it feel easy to jump in and start crunching numbers. We assume that Google will do it for us. That we can peruse a report and find something meaningful without too much deliberation beforehand. Unless you have an incredibly good eye for anomalies, this rarely works.

Without an intention it’s easy to get nowhere fast.

So don’t begin your analysis with a desire to find something… anything. Start with a question and use the data to answer it.
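As a sketch of what that can look like in practice, suppose the question is: “Did the new landing page convert better than the old one?” One common way to answer it is a two-proportion z-test, shown below in Python. The visit and conversion counts are invented, the function name is our own, and this test is only one of several reasonable approaches.

```python
import math


def two_proportion_z_test(conv_a, visits_a, conv_b, visits_b):
    """Two-sided z-test for a difference between two conversion rates."""
    rate_a = conv_a / visits_a
    rate_b = conv_b / visits_b
    # Pooled rate under the assumption that both pages convert equally well.
    pooled = (conv_a + conv_b) / (visits_a + visits_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z = (rate_b - rate_a) / std_err
    # Probability of a gap at least this large if there were no real difference.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: old page 150 of 5,000 visits, new page 165 of 5,000.
z, p_value = two_proportion_z_test(150, 5000, 165, 5000)

print(f"z = {z:.2f}, p = {p_value:.2f}")
if p_value < 0.05:
    print("The difference is unlikely to be chance alone.")
else:
    print("Not enough evidence that the new page converts better.")
```

With these made-up numbers the new page looks better on paper (3.3% against 3.0%), but the test says the gap could easily be noise. Starting with the question makes it possible to get an answer like that, even when it isn’t the answer we hoped for.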

Be scientific.

So those are four of many ways to avoid the streetlight effect. If we had to sum them all up into one overarching piece of advice it would be this:

In the same way there’s no “perfect” set of data, there’s no “perfect” source of data or analysis method. But there is an approach to both tasks that will stand you in good stead. And that’s to think like a scientist.

By that we don’t mean simply being inquisitive or rigorous (although these are both really important). We mean putting your theories to the test without fear or favour.

You should approach your data analysis with a complete openness to having your hypothesis disproved, your preconceptions obliterated.

Search for data and then process it outside the light and let the facts fall as they may.

It’s not easy. Outside science, having your assumption or idea invalidated can be deflating.

When you shift your thinking and approach things scientifically, though, that invalidation can be stimulating. Even exhilarating. Why? Because it almost certainly leads you closer to the truth. Or at least a better understanding of what you’re interested in, whether that’s the location of your keys or the behaviour of your website visitors.
